“Digital transformation in manufacturing demands a more strategic, value-driven approach to cloud services. Without a new approach, manufacturing businesses will struggle to tackle complex technology-powered initiatives in the future.”

Why Do CAD Workloads Need a Different VDI Approach?

Modern CAD applications (SolidWorks, CATIA, Siemens NX, Creo) use OpenGL or DirectX to render 3D geometry on the GPU. Navigating a large assembly requires 30–60 FPS with minimal latency; running a CFD simulation requires sustained GPU compute over hours. The three critical constraints are:

- GPU VRAM: large assemblies need 4–16 GB. Exceeding available VRAM forces fallback to system RAM, producing the stuttering that makes engineers reject VDI. This is the #1 cause of poor CAD VDI performance.

- Input-to-display latency: CAD users notice latency above 30 ms when rotating assemblies. Session encoding compression directly impacts this.

- ISV certification: Dassault, Siemens, Autodesk, and PTC maintain certified VDI configuration lists. Uncertified setups risk the ISV refusing support on rendering bugs. NVIDIA’s vGPU certification program is the industry reference; OCI A10/A100 GPU instances are certified. Verify versions against Dassault’s certification portal.

“Since work that was typically done by the CPU is offloaded to the GPU, the user has a much better experience. This enables support for demanding engineering and creative applications in a virtualized and cloud environment.”

Which GPU Virtualization Architecture Should You Choose for CAD VDI?

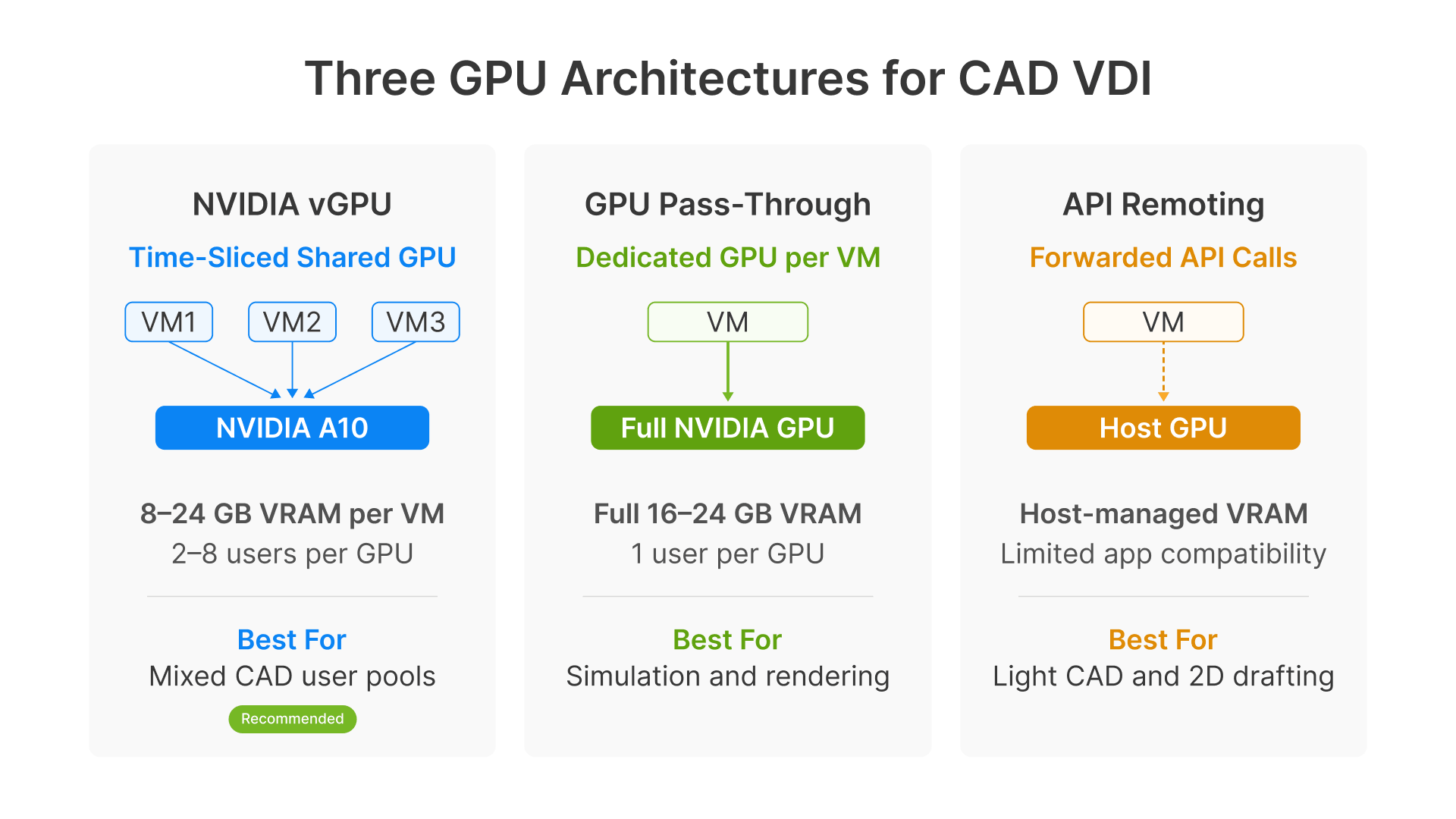

There are three fundamentally different ways to deliver GPU acceleration to a cloud VDI session for CAD engineering workloads. Each has a different performance profile, cost model, and operational complexity.

NVIDIA vGPU (Shared GPU)

NVIDIA vGPU (Virtual GPU) is a time-slicing technology that shares one physical GPU across multiple VMs. VRAM is partitioned per VM in configurable profile sizes. This requires NVIDIA vGPU software licensing (per GPU, subscription basis). It delivers full OpenGL and DirectX support with certified drivers. Best for mixed CAD user populations where not every user needs maximum GPU at the same time. On OCI, the BM.GPU.A10.4 shape supports vGPU profiles up to 24 GB per VM, serving 2 to 8 CAD users per GPU depending on profile size.

GPU Pass-Through (Dedicated)

A full physical GPU is dedicated exclusively to a single VM, with no sharing and no overhead. This gives maximum performance for single-user engineering workstations, but at a 1:1 GPU-to-VM ratio it’s expensive at scale. No vGPU license required. Best for simulation analysts, rendering workloads, and the highest-demand CAD users. On OCI, use VM.GPU3.1 (1x V100) or VM.GPU.A10.1 (1x A10).

API Remoting

OpenGL/DirectX API calls are forwarded from the guest VM to the host GPU. There is no VRAM partitioning; the host handles rendering. Per-user cost is lower than vGPU, but compatibility is limited, and this approach is not recommended for complex 3D assembly work with large VRAM requirements in manufacturing CAD. Best for lighter CAD workloads, 2D drafting, and visualization-only use cases.

| Recommendation for manufacturing: Start with NVIDIA vGPU on OCI A10 shapes for most CAD users. Add dedicated pass-through shapes (VM.GPU3.1 or VM.GPU.A10.1) for simulation analysts who saturate a full GPU. A hybrid pool (vGPU for standard users, pass-through for power users) is the most cost-effective production configuration for manufacturing engineering VDI. |
|---|
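
To make the vGPU density figures concrete, here is a minimal sketch of the partitioning arithmetic. It assumes every vGPU on a physical GPU uses the same fixed-size profile and that each A10 exposes 24 GB; the function names are illustrative and not part of any NVIDIA or OCI API.

```python
# Illustrative arithmetic only: equal-size vGPU profiles on a 24 GB A10.
A10_VRAM_GB = 24  # assumption: usable framebuffer per physical A10


def users_per_gpu(profile_gb: int, gpu_vram_gb: int = A10_VRAM_GB) -> int:
    """Number of equal-size vGPU profiles that fit on one physical GPU."""
    if profile_gb <= 0 or profile_gb > gpu_vram_gb:
        raise ValueError("profile size must be between 1 GB and the GPU's VRAM")
    return gpu_vram_gb // profile_gb


def users_per_host(profile_gb: int, gpus_per_host: int = 4) -> int:
    """Sessions per host when every GPU uses the same profile (4 GPUs, as on BM.GPU.A10.4)."""
    return users_per_gpu(profile_gb) * gpus_per_host


if __name__ == "__main__":
    for size_gb in (3, 8, 12, 24):
        print(f"{size_gb} GB profile: {users_per_gpu(size_gb)} per GPU, "
              f"{users_per_host(size_gb)} per 4-GPU host")
```

A 12 GB profile yields 2 users per GPU and a 3 GB profile yields 8, which matches the 2 to 8 range quoted for the A10. Check the profile sizes actually offered against NVIDIA's vGPU documentation before planning capacity.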

Which OCI GPU Shape Should You Choose for CAD Engineering?

Oracle Cloud Infrastructure offers several GPU-equipped instance shapes for manufacturing engineering cloud workstations. Selecting the right one means matching VRAM, compute capability, and cost to each engineering user persona. As Oracle states, OCI Compute powered by NVIDIA GPUs provides consistent high performance for VDI, and this applies directly to CAD VDI GPU workloads in manufacturing.

| OCI Shape | GPU | VRAM | Manufacturing CAD Use Cases |
|---|---|---|---|
| VM.GPU.A10.1 | 1x A10 | 24 GB | Standard CAD users: SolidWorks mid-size assemblies, AutoCAD 3D, Inventor. Recommended baseline for most engineers. |
| VM.GPU.A10.2 | 2x A10 | 48 GB | Power CAD: CATIA V5/V6 large assemblies, Siemens NX complex surfaces, concurrent CAD + simulation sessions. |
| BM.GPU.A10.4 | 4x A10 | 96 GB | vGPU host for user pools: partition into 4–8 profiles for concurrent multi-user sessions. Most cost-efficient for shared pools. |
| VM.GPU3.1 | 1x V100 | 16 GB | FEA/CFD simulation: Ansys Mechanical, COMSOL. Higher FP64 compute for simulation-heavy workflows. |
| BM.GPU4.8 | 8x A100 | 320 GB | Large simulations, ML/AI, rendering farms. Not typical for interactive CAD; use for batch processing. |

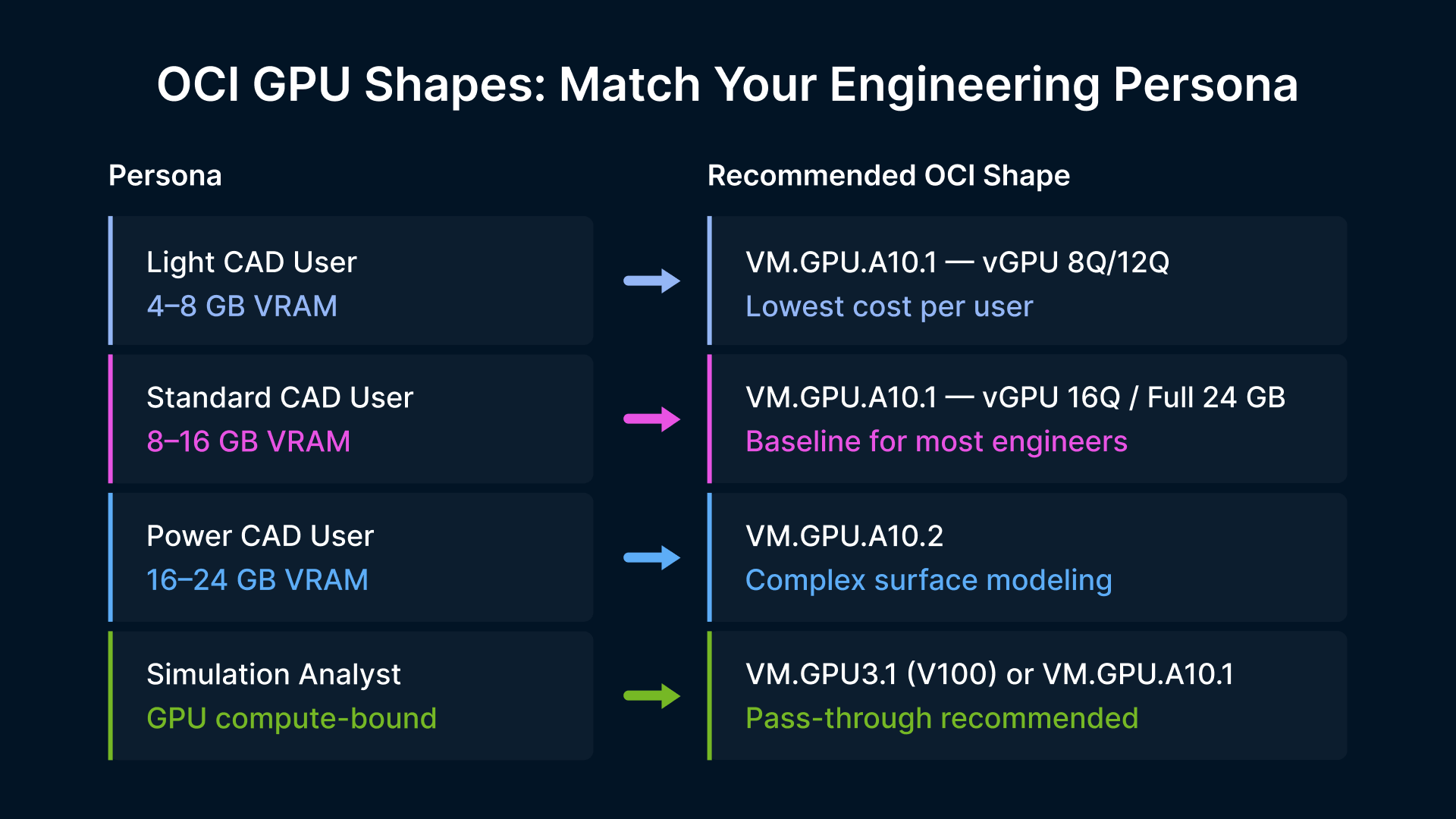

How Should You Size GPU Shapes by User Persona?

The most common mistake in CAD VDI is sizing GPU shapes for the average workload instead of the peak. Define your engineering workstation VDI GPU personas first:

- Light CAD users (2D drafting, small assemblies under 200 components): 4–8 GB VRAM. Use VM.GPU.A10.1 with NVIDIA vGPU 8Q or 12Q profile, shared across 2–3 users.

- Standard CAD users (full 3D assembly, up to 500 components, rendering): 8–16 GB VRAM. Use VM.GPU.A10.1 with dedicated 24 GB or vGPU 16Q profile.

- Power CAD users (1,000+ components, CATIA DMU, complex surfaces): 16–24 GB VRAM. Use VM.GPU.A10.2.

- Simulation analysts (Ansys, Abaqus, COMSOL — GPU compute-bound): Use VM.GPU3.1 for FP64 workloads or VM.GPU.A10.1 for FP32 with large visualization.
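
The persona list above can be sketched as a small lookup keyed on each user's measured peak VRAM, not the average. The VRAM ceilings and shape names are copied from the list; the `Persona` class and `pick_persona` function are hypothetical helpers. Simulation analysts are left out because the list treats them as compute-bound, not VRAM-bound.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    name: str
    max_vram_gb: int  # upper end of the VRAM range in the persona list
    shape: str        # suggested OCI shape from the persona list


# Ordered from smallest to largest so the first match is the cheapest fit.
PERSONAS = [
    Persona("light", 8, "VM.GPU.A10.1 (shared vGPU profile)"),
    Persona("standard", 16, "VM.GPU.A10.1"),
    Persona("power", 24, "VM.GPU.A10.2"),
]


def pick_persona(peak_vram_gb: float) -> Persona:
    """Return the smallest persona whose VRAM ceiling covers the measured peak."""
    for persona in PERSONAS:
        if peak_vram_gb <= persona.max_vram_gb:
            return persona
    raise ValueError("peak VRAM exceeds 24 GB; no persona in this list fits")


if __name__ == "__main__":
    # A user who averages 6 GB but peaks at 14 GB is a standard user, not a light one.
    print(pick_persona(14).name)
```

Feeding the function the peak value is the point: the same user sized on a 6 GB average would land in the light pool and hit VRAM exhaustion on large assemblies.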

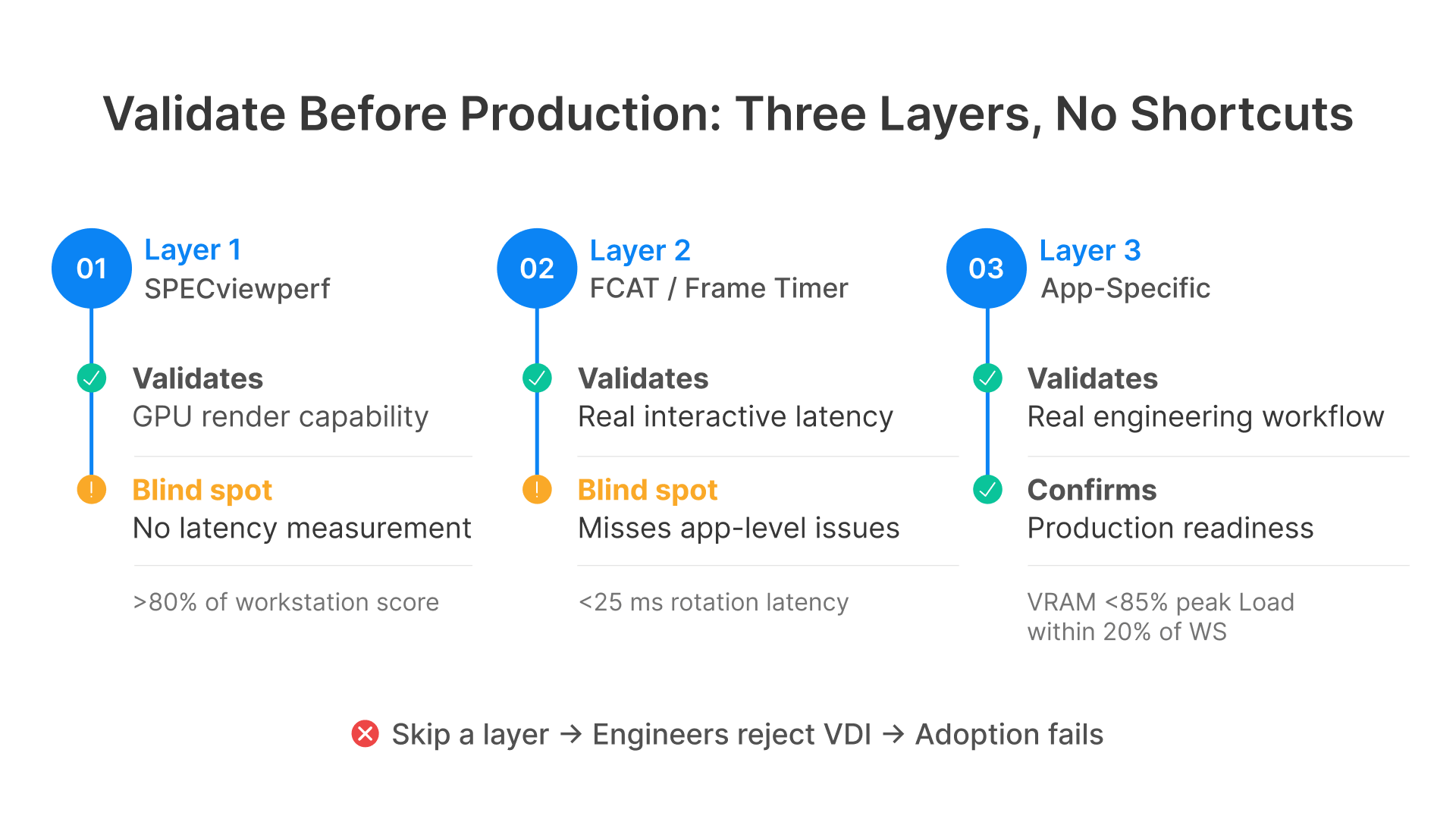

How Should You Benchmark CAD VDI Before Production Rollout?

Deploying GPU VDI for CAD without structured performance validation is the fastest way to create engineering team resistance that’s very hard to reverse. Engineers who have a bad pilot experience will resist adoption regardless of later improvements.

| For a broader view of the manufacturing VDI modernization journey, see Manufacturing VDI Modernization: On-Premises, Hybrid, and Cloud Infrastructure Guide. |
|---|

SPECviewperf: The Industry Standard CAD Graphics Benchmark

SPECviewperf is the worldwide standard for measuring graphics performance based on professional applications, published by the Standard Performance Evaluation Corporation (SPEC). It measures OpenGL rendering performance using workloads (called viewsets) derived from real CAD applications including Creo, SolidWorks, CATIA, Siemens NX, and ANSYS, without needing to install the applications themselves. As a practical target: an OCI VM.GPU.A10.1 running the SolidWorks (sw-06) workload should score within 80 to 90 percent of a comparable physical workstation with an NVIDIA RTX A4000 GPU. If scores fall short, investigate GPU driver version, vGPU profile configuration, and session encoding offload.

FCAT: How Do You Measure Real Interactive Latency?

SPECviewperf measures raw rendering throughput but not end-to-end session latency. FCAT (Frame Capture Analysis Tool), developed by NVIDIA, captures actual frame delivery timing in real-time sessions. For CAD VDI acceptance, measure latency during three tasks: assembly rotation (target below 25 ms average), zoom-to-fit on a large assembly (below 35 ms), and 2D annotation (below 10 ms).

What Application-Specific Tests Should You Run?

Beyond synthetic benchmarks, run these application-level tests during your engineering workstation VDI GPU pilot:

- Large assembly load time: open your three largest production assembly files and measure time to full load. Target: within 20% of physical workstation.

- Assembly rotation consistency: with the largest assembly open, perform continuous rotation for 60 seconds. Frame rate drops below 15 FPS are noticeable. VRAM exhaustion during this test signals you need a bigger shape.

- Multi-application concurrent use: open CAD + PDM/PLM client + PDF reader simultaneously. Many CAD sessions run 4–6 applications — single-app benchmarks underestimate real VRAM needs.

- Print and PDF export: test large spooling operations. Printing in VDI is frequently worse than physical workstations without specific virtual printer configuration.
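
Two of the tests above reduce to simple checks once timings are recorded. The sketch below assumes you log load times in seconds and frame delivery timestamps as seconds since the start of the 60-second rotation test; both function names are illustrative.

```python
from collections import Counter


def load_time_within_target(vdi_seconds, workstation_seconds, tolerance=0.20):
    """True if the VDI load time is within 20% of the physical workstation."""
    return vdi_seconds <= workstation_seconds * (1 + tolerance)


def slow_seconds(frame_times, min_fps=15, duration_s=60):
    """Return the one-second buckets in which fewer than min_fps frames arrived."""
    per_second = Counter(int(t) for t in frame_times if 0 <= t < duration_s)
    # Counter returns 0 for seconds with no frames, so stalls are reported too.
    return [s for s in range(duration_s) if per_second[s] < min_fps]


if __name__ == "__main__":
    # Synthetic 3-second trace: 30 FPS, a dip to 10 FPS, then 30 FPS again.
    trace = [s + i / 30 for s in (0, 2) for i in range(30)]
    trace += [1 + i / 10 for i in range(10)]
    print(slow_seconds(trace, duration_s=3))
    print(load_time_within_target(66, 60))
```

Counting frames per second (as opposed to averaging over the whole run) surfaces short stalls, which is where VRAM exhaustion tends to show up first.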

| Metric | Tool | Target | Why It Matters |
|---|---|---|---|
| OpenGL throughput | SPECviewperf | >80% of workstation | Baseline GPU shape adequacy |
| Frame latency | FCAT | <25 ms avg rotation | Primary UX determinant for interactive CAD |
| VRAM utilization | nvidia-smi / Datadog | <85% peak | Headroom before performance degradation |
| Encoding quality | Thinfinity stats | No artifacts on edges | Perceived resolution of engineering geometry |
| Assembly load time | Manual timing | Within 20% of desktop | Daily productivity impact |
| Bandwidth/session | Datadog / OCI metrics | <25 Mbps average | Concurrent session capacity planning |
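
The numeric targets in this table can be turned into a simple pilot gate. This is a sketch under stated assumptions: the metric keys are made-up names, the measurements are collected by hand or exported from your monitoring tools, and encoding quality is omitted because it is a visual judgment.

```python
import operator

# Thresholds copied from the table above; the key names are illustrative.
TARGETS = {
    "specviewperf_ratio": (">", 0.80),   # VDI score / workstation score
    "rotation_latency_ms": ("<", 25),    # FCAT average during rotation
    "vram_peak_pct": ("<", 85),
    "load_time_ratio": ("<=", 1.20),     # VDI load time / desktop load time
    "bandwidth_mbps": ("<", 25),         # average per session
}

OPS = {">": operator.gt, "<": operator.lt, "<=": operator.le}


def failed_metrics(measured):
    """Return the names of metrics that are missing or miss their target."""
    failures = []
    for name, (op, limit) in TARGETS.items():
        value = measured.get(name)
        if value is None or not OPS[op](value, limit):
            failures.append(name)
    return failures


if __name__ == "__main__":
    pilot = {
        "specviewperf_ratio": 0.86,
        "rotation_latency_ms": 22,
        "vram_peak_pct": 91,
        "load_time_ratio": 1.10,
        "bandwidth_mbps": 18,
    }
    print(failed_metrics(pilot))
```

Treating a missing measurement as a failure keeps an incomplete pilot from passing by omission.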

Which Codec Works Best for CAD VDI Sessions?

Session encoding (compressing rendered GPU frames before sending them to the user) is where most CAD VDI deployments in manufacturing get into trouble. The wrong codec produces visible compression artifacts on fine engineering geometry, reduces frame rates, or consumes too much bandwidth.

H.264, H.265, and AV1: the Tradeoffs

H.264 (AVC) is the legacy default: universally supported and low latency, but it introduces visible artifacts on CAD wireframes and dimension text at moderate bitrates. H.265 (HEVC) delivers equivalent visual quality at 40–50% lower bitrate. That means cleaner geometry at the same bandwidth, but older thin clients may not support hardware decode. AV1 offers 30–50% better compression than H.265 and royalty-free licensing. Hardware AV1 encoding in NVENC arrived with NVIDIA’s Ada Lovelace generation (for example L4 or L40); the Ampere-based A10 encodes H.264 and H.265 in hardware but not AV1, so confirm encoder support for the GPU you deploy. Endpoint support is improving but not yet universal. AV1 is the codec to evaluate for new manufacturing engineering cloud workstation deployments that target modern endpoints and AV1-capable GPUs.

How Much Bandwidth Does Each CAD Scenario Require?
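
Link sizing in this section comes down to concurrent sessions multiplied by per-session bitrate. The sketch below works through the section's 50-user example (80% concurrency, 20 Mbps per active session); the function name is illustrative, and the result is a peak estimate, not an average.

```python
def peak_link_mbps(users, concurrency, mbps_per_session):
    """Return (concurrent_sessions, total_mbps) for a peak-hour estimate."""
    if not 0 <= concurrency <= 1:
        raise ValueError("concurrency must be a fraction between 0 and 1")
    sessions = round(users * concurrency)
    return sessions, sessions * mbps_per_session


if __name__ == "__main__":
    sessions, total = peak_link_mbps(users=50, concurrency=0.80, mbps_per_session=20)
    print(f"{sessions} sessions, {total} Mbps")  # 40 sessions, 800 Mbps
```

Rerun it with the per-scenario bitrates for your own user mix; a team that mostly does 2D drafting needs far less than this peak figure.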

Typical per-session bandwidth by scenario:

- 2D drafting: 1–5 Mbps.

- 3D assembly navigation (interactive): 10–25 Mbps during active rotation, which is when users are most sensitive to quality.

- Photorealistic rendering: 5–15 Mbps.

- FEA/CFD result visualization: 8–20 Mbps during animation playback.

For a manufacturing engineering team of 50 users with 80% concurrency, plan for 40 concurrent sessions at 20 Mbps each, or 800 Mbps total at peak. OCI’s free egress allowance (10 TB/month per region) offsets data transfer cost; the main constraint is your WAN connectivity to OCI.

What Production Tools Do You Need for GPU CAD VDI?

NVIDIA vGPU Software: The License Behind Certified GPU Virtualization

For multi-user GPU pools using NVIDIA vGPU (shared GPU across multiple VMs), NVIDIA vGPU Software licensing is required. For CAD VDI in manufacturing, NVIDIA RTX vWS (Virtual Workstation) is the appropriate tier; it’s the only one that provides certified OpenGL support for professional CAD applications. On OCI, vGPU licensing may be incorporated into GPU instance pricing for certain shapes. Compare the combined cost (OCI GPU shape + vGPU license + Thinfinity) against physical engineering workstations over a 3-year horizon. For a detailed TCO comparison, see Total Cost of Ownership: On-Prem VDI vs Cloud VDI in Manufacturing.

Thinfinity RDC and VNC: How Session Delivery Works for CAD

Thinfinity delivers CAD engineering sessions over two core protocols: Thinfinity RDC (Remote Desktop Connection) for Windows GPU session hosts running SolidWorks, CATIA, NX, AutoCAD, and Ansys; and Thinfinity VNC (Virtual Network Computing) for Linux-based engineering workloads like MATLAB, FreeCAD, and OpenFOAM. Both are accessible from the same Thinfinity portal with one authentication flow. RDC encodes rendered frames with NVENC (NVIDIA’s dedicated hardware video encoder) and transmits them over an encrypted WebSocket to the user’s browser, with no client install needed. NVENC offloads all compression from the CPU to the GPU’s dedicated encode engine, so the GPU compute allocated for CAD rendering isn’t throttled by encoding overhead.

FSLogix: How to Handle Large CAD User Profiles

FSLogix Profile Containers (Microsoft’s profile container technology for VDI) handle profile roaming for cloud VDI sessions, but CAD-specific caches (SolidWorks FeatureWorks, CATIA CGR files, NX JT geometry) can reach tens of gigabytes and should be excluded from roaming. They regenerate from source files. Similarly, PDM/PLM local caches (Teamcenter, Windchill, Vault) should be redirected to a fixed local path. Without these exclusions, FSLogix containers grow to 20–50 GB per user, causing mount-time performance issues and storage cost overruns in your CAD VDI GPU environment.

Datadog: How to Monitor GPU Session Hosts

Datadog’s NVIDIA DCGM integration (Data Center GPU Manager) collects GPU metrics from OCI session hosts: GPU utilization, VRAM usage, temperature, and encoder utilization. Key dashboards for manufacturing CAD VDI operations are VRAM utilization per host (alert at 85%), GPU encoder utilization (above 90% means the host is encoding-bound, which increases latency for new sessions), and session count per shape. Layer Thinfinity session metrics on top for the complete picture of your engineering workstation VDI GPU performance.

How Does Thinfinity Deliver GPU-Accelerated CAD on OCI?

Adaptive Session Encoding for Manufacturing CAD

Thinfinity uses NVENC hardware encoding on all OCI A10 and V100 GPU shapes. It supports H.264 and H.265 with three configurable quality profiles: adaptive quality (adjusts to network conditions), quality-optimized (best fidelity at higher bandwidth), and bandwidth-optimized (for constrained links). Encoding parameters are configurable per user pool in Thinfinity Cloud Manager, so power CAD users on fast connections get different policies than remote engineers on slower links.

Shift-Aligned Autoscaling for Engineering Teams

Thinfinity Cloud Manager provisions OCI GPU shapes as session host pools and applies autoscaling based on session count, schedules, or custom triggers. For manufacturing engineering teams with predictable shifts, configure pre-provisioning 10–15 minutes before shift start (VM.GPU.A10.1 starts in 2–4 minutes). This eliminates the cost of running GPU instances during nights and weekends, a critical advantage for controlling manufacturing engineering cloud workstation costs.

Multi-Monitor and Peripheral Support

CAD engineers typically need multiple monitors: a primary for the 3D viewport and a secondary for the feature tree. Thinfinity supports multi-monitor configurations with configurable resolution per display (typically 2560×1440 or 3840×2160). Use H.265 encoding for high-resolution sessions. For peripherals, Thinfinity supports USB redirection for 3Dconnexion SpaceMouse devices (via USB HID), Wacom tablets, and USB software licensing dongles. For dongle-dependent applications, deploying a network license manager (FlexLM, DSLS) is the recommended long-term approach for cloud VDI.

Thinfinity RBI on OKE: Web SCADA Access for Engineers

Engineers who use SolidWorks also need access to web-based SCADA interfaces, historian platforms (PI Vision, AVEVA Insight), and MES dashboards. Rather than running these browser apps in a GPU RDC session, Thinfinity RBI (Remote Browser Isolation) on OCI Kubernetes Engine (OKE) spins up ephemeral browser containers that are isolated, secure, and destroyed when closed, all from the same portal. OKE node pools for RBI are standard CPU shapes (no GPU needed), sized separately from the GPU pool. This combination (RDC for CAD on GPU shapes, VNC for Linux tools, RBI on OKE for web SCADA) gives manufacturing engineering teams one portal covering their entire working environment. No VPN, no multiple tools, one authentication, one audit trail.

Key Takeaways

- Start with NVIDIA vGPU on OCI A10 shapes. Add pass-through for simulation analysts.

- Size by peak workload per persona, not average.

- Benchmark three layers: SPECviewperf + FCAT + real app tests. No shortcuts.

- Use H.265 with NVENC offload. Evaluate AV1 for modern endpoints.

- Shift-aligned autoscaling cuts GPU costs 35–50%.

- Thinfinity on OCI: RDC + VNC + RBI = one portal, Zero Trust.

Frequently Asked Questions

Can SolidWorks and CATIA run in cloud VDI with ISV support?

How does OCI latency affect CAD sessions?

Round-trip latency to OCI is 10–30 ms from major metro areas, which is within the 30 ms threshold. For locations at 50 ms or more, consider on-premises GPU hosts under a hybrid Thinfinity architecture.

Cloud GPU VDI vs physical workstations: what’s cheaper?

How does Thinfinity handle different GPU needs per user?

Thinfinity uses separate session host pools per GPU configuration: standard (vGPU 16Q), power (full 48 GB A10.2), and simulation (VM.GPU3.1 FP64). Users are assigned to pools by AD group or Thinfinity RBAC.