TL;DR

- Mining and energy remote operations centers (ROCs) replace FIFO rotations and ruggedized field workstations with browser-based VDI delivered over VSAT, Starlink, or private fiber.

- Thinfinity Workspace streams full SCADA HMI, historian trends, and GPU-accelerated 3D mine planning at 50–200 Kbps per session on OCI — where Citrix ICA and VMware Horizon PCoIP choke.

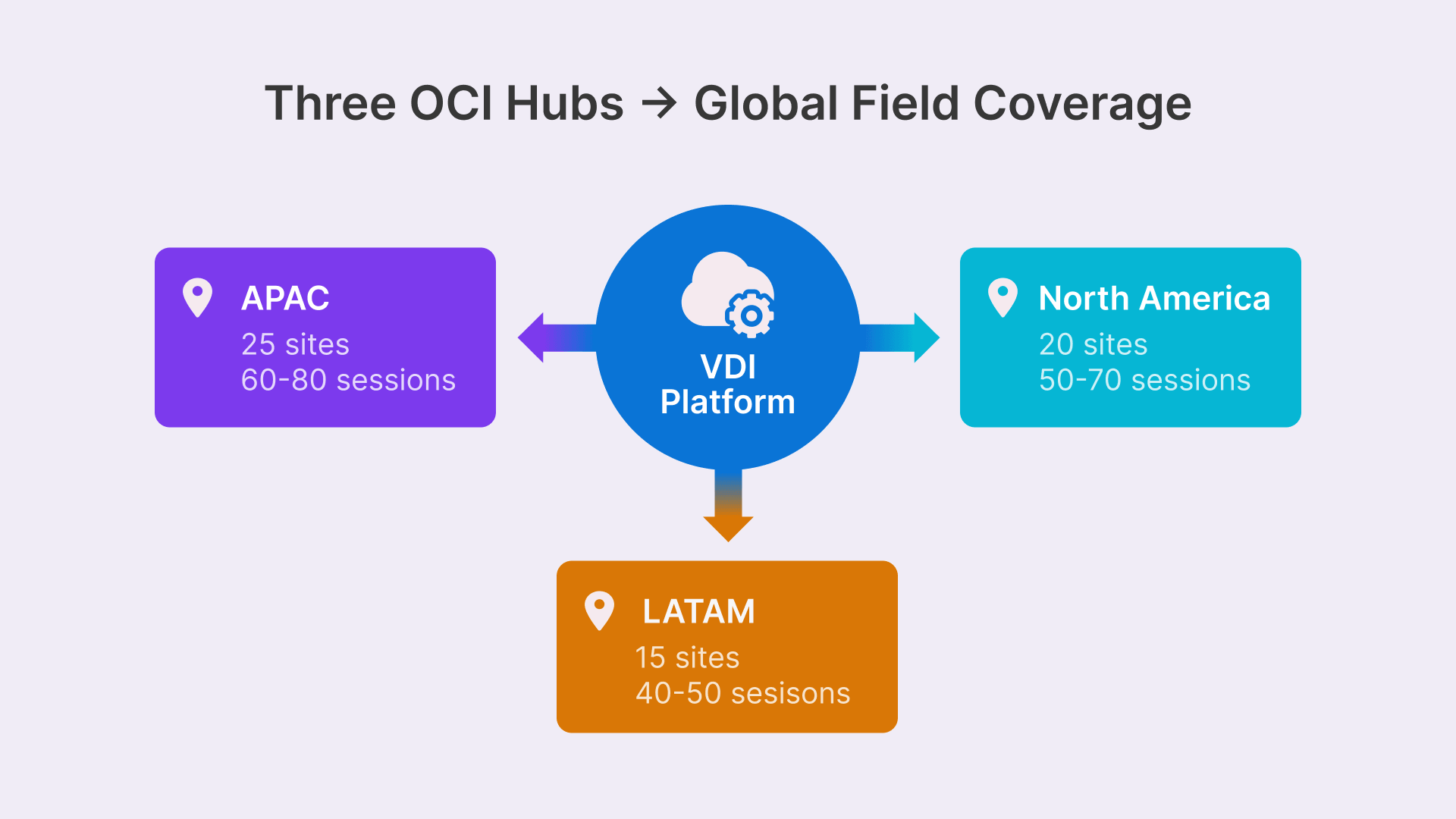

- A three-hub OCI topology (APAC, LATAM, North America) combined with ISA/IEC 62443 Level 3.5 DMZ placement, jump servers, MFA, and session recording satisfies NERC CIP audit requirements.

- 5-year TCO models for 500-endpoint mining and 2,000-endpoint energy operations show 88–90% cost reduction versus field workstation fleets.

- A 24-week roadmap moves pilots to full fleet deployment with ISA 62443 Level 3 audit validation and <30-second automated failover across OCI regions.

The Reality of Distributed Mining Operations

A Tier-1 iron ore producer in Western Australia operates 12 mine sites within a 400km radius of Perth. Each site runs 25–40 engineering workstations—Panasonic Toughbooks and Advantech industrial PCs—accessing GE iFIX for process control, OSIsoft PI for historian data, and Datamine for mine planning. The annual hardware budget is $3.8 million. The annual IT travel budget for on-site support is $1.2 million. Satellite bandwidth costs $400,000/year. Downtime costs the company $50,000 per hour per major site.

This is not a hypothetical scenario. This is today’s mining and energy infrastructure, scaled globally. Across 120+ mine sites, 200+ electric utility substations, and 300+ oil and gas facilities, operators face the same constraint: ruggedized endpoints are expensive, slow to replace, and lock engineers into geographies.

The remote operations center (ROC) model is the industry response. Instead of 300 workstations in the field, consolidate 150 desktops into a Perth operations center, staffed by rotating engineers—FIFO (Fly In / Fly Out) swapped for LILO (Live In / Live Out). Bandwidth drops 40%. Hardware ROI improves by 55%. But only if you can deliver full SCADA HMI, historian access, and GPU-accelerated 3D mine planning over a 2–5 Mbps link—or Starlink, which behaves like a 1–2 Mbps VSAT on bad days.

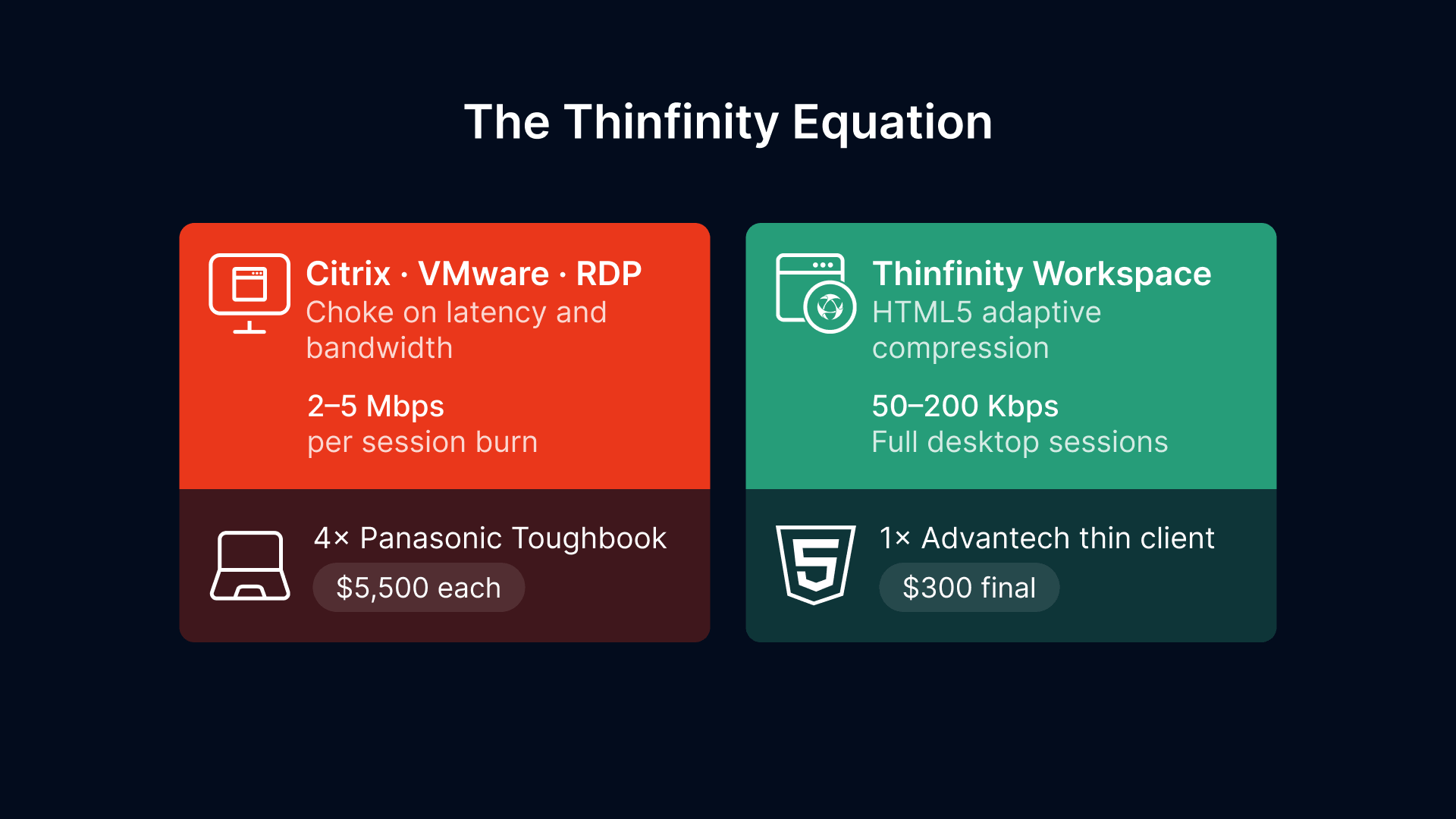

Traditional VDI solutions (Citrix, VMware Horizon) choke on latency and bandwidth. Remote Desktop Protocol (RDP) alone burns 2–5 Mbps per session. Thinfinity Workspace changes the equation: HTML5 adaptive compression delivers full desktop sessions at 50–200 Kbps. On OCI, with hub-and-spoke zone architecture and ISA/IEC 62443 Level 3.5 DMZ placement, you replace four ruggedized workstations with one thin client—a $300 Advantech device replacing a $5,500 Panasonic Toughbook.

This article walks you through the architecture, security model, 5-year TCO, and a 24-week deployment roadmap. By the end, you’ll understand why the Rio Tinto Excellence in Mining center (Perth) and BHP Integrated Remote Operations are both deploying this model—and how to avoid the three critical mistakes that blocked earlier attempts.

Part 1: The ROC Transformation—From FIFO to LILO with Browser-Based VDI

Remote operations centers are not new. Rio Tinto opened its Excellence in Mining center in Perth in 2014. BHP launched Integrated Remote Operations in Brisbane in 2016. But both were built on-premises: legacy Citrix clusters, 60 Mbps corporate links, no edge optimization.

The business case for ROC is clear:

- FIFO/LILO cost: A FIFO rotation costs $180,000–$240,000 per engineer per year (flights, accommodation, turnover). A LILO model eliminates 50% of that premium. For a 60-person operations center, savings reach $5.4 million annually.

- Hardware consolidation: Instead of 300 ruggedized PCs ($4,500 each, total $1.35M), operate 80 workstations + 150 thin clients ($300 each, total $425K). Year-1 capex drops by $925K.

- Support model: One core infrastructure team (8 people, $1.2M/year) replaces 12 field technicians traveling to 12 sites ($1.8M/year). Net savings: $600K/year.

- Latency advantage: Remote operators at a Perth center can manage a Pilbara mine site (350km away) with 10ms latency over a private fiber link. A field technician managing the same site by RDP to an on-premise SCADA HMI suffers 50–120ms.
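The savings figures in the list above follow from simple arithmetic. A minimal sketch, using only the numbers quoted above (the function names are illustrative and not part of any product):

```python
def lilo_savings(fifo_cost_per_engineer: float, share_eliminated: float, headcount: int) -> float:
    """Annual savings when a share of the FIFO cost is removed for every engineer."""
    return fifo_cost_per_engineer * share_eliminated * headcount


def support_savings(field_team_cost: float, core_team_cost: float) -> float:
    """Annual savings from replacing travelling technicians with one core team."""
    return field_team_cost - core_team_cost


# Low end of the quoted FIFO range, 50% eliminated, 60-person operations center.
fifo = lilo_savings(180_000, 0.50, 60)
# 12 field technicians ($1.8M/year) replaced by one core team ($1.2M/year).
support = support_savings(1_800_000, 1_200_000)

print(f"FIFO/LILO savings: ${fifo:,.0f}")
print(f"Support savings:   ${support:,.0f}")
```

Using the high end of the FIFO range ($240,000) in the same formula would raise the first figure proportionally.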

But VDI over satellite or VSAT is where traditional solutions fail. Citrix ICA, optimized for corporate LANs, sends full screen redraws on every pixel change, which wastes bandwidth when 90% of a SCADA HMI is static (gauges, labels, legends). VMware Horizon PCoIP was designed for 10ms latency and 100 Mbps availability. When you have 200ms latency and a 3 Mbps Starlink link, both choke. Field operators report 5–8 second delays between clicking a button and seeing the result. In critical scenarios, such as a generator trip or a pressure vessel alarm, that delay costs money and puts safety at risk.

Thinfinity Workspace, HTML5-native, adapts compression in real time. On a 2 Mbps link:

- Static content (SCADA gauge displays): 30 Kbps—pixels sent once, reused for 10 seconds.

- Live dashboards (historian trends): 80 Kbps—vector redraws only changed data points.

- GPU 3D mine planning (rotation, zoom): 180 Kbps—H.265 codec, 10fps, 800×600 viewport.

Compare to RDP at the same resolution: 1.8–2.5 Mbps baseline, spiking to 5+ Mbps on window drag.
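To make the per-session figures concrete, here is a rough capacity sketch for the 2 Mbps link above. It assumes the quoted bitrates are sustained averages and ignores protocol overhead and headroom, so real capacity would be lower; `sessions_per_link` is a hypothetical helper, not a Thinfinity function.

```python
def sessions_per_link(link_kbps: int, session_kbps: int) -> int:
    """How many sessions of a given bitrate fit on a link, ignoring overhead."""
    if session_kbps <= 0:
        raise ValueError("session_kbps must be positive")
    return link_kbps // session_kbps


LINK_KBPS = 2_000  # the 2 Mbps link from the text

# Per-session bitrates quoted in the text, in Kbps.
profiles = {
    "static SCADA gauges": 30,
    "historian trends": 80,
    "GPU 3D mine planning": 180,
    "RDP baseline (low end)": 1_800,
}

for name, kbps in profiles.items():
    print(f"{name}: {sessions_per_link(LINK_KBPS, kbps)} sessions")
```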

This efficiency enables the ROC model. A Rio Tinto engineer in Perth controls mining equipment 400km away with responsive, full-featured SCADA HMI—the same interface they would have on-site.

It wasn’t really about new technology, but about new operating and management processes to optimise the whole system.

The financial case for this transformation runs deeper than hardware savings. Ruggedized endpoints cost $4,000–$8,000 per unit, fail at 12–18% annually in harsh conditions, and require $5,000–$25,000 in logistics costs per replacement trip to remote sites. The full cost anatomy also includes hidden expenses that most CFOs never see: helicopter logistics, production downtime, shadow IT, and cyber insurance premiums.

Part 2: Architecture for Extreme Environments—Hub-and-Spoke on OCI

Regional Hub Topology

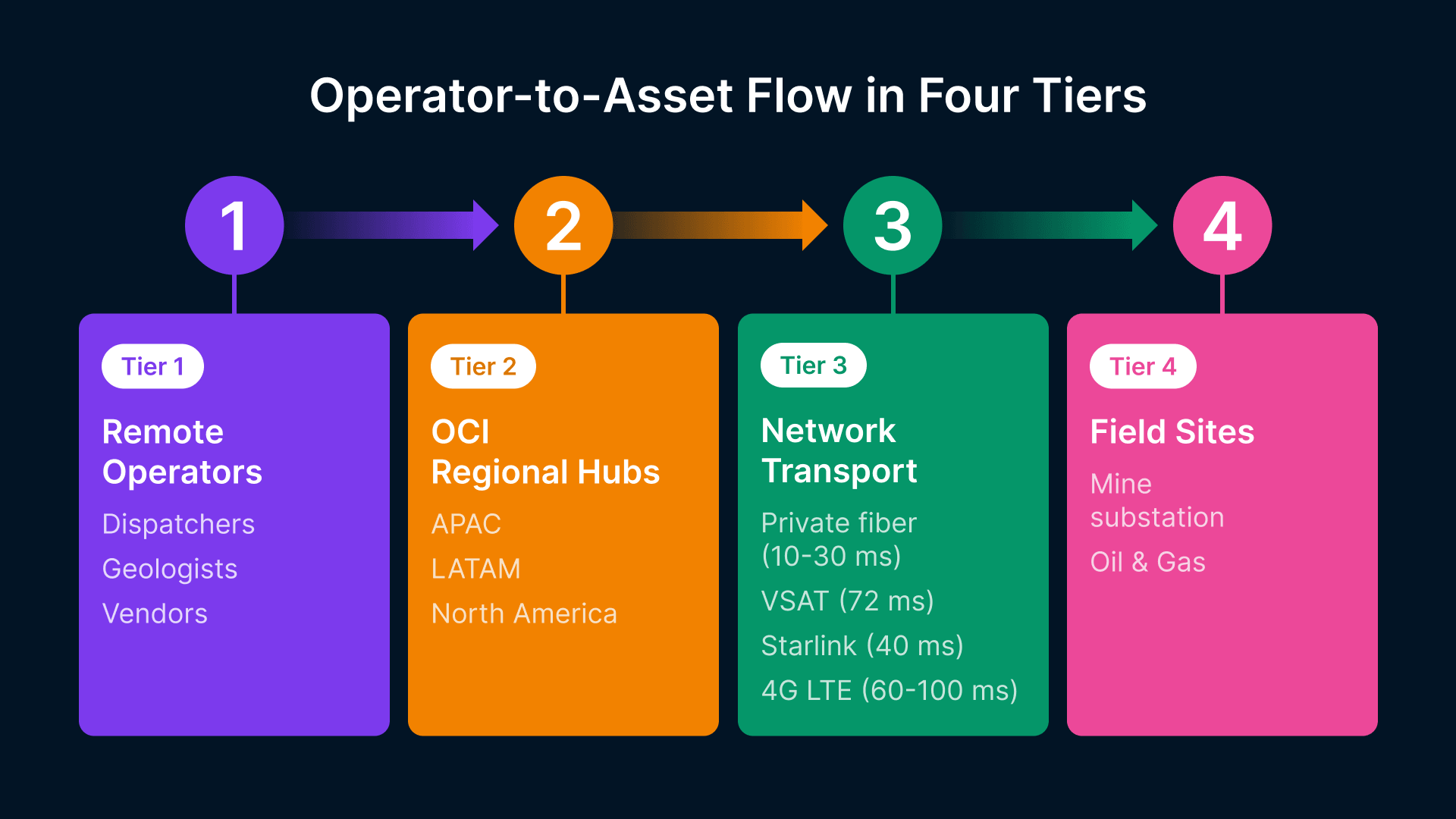

Mining and energy operations span three major geographic clusters: APAC (Australia, Indonesia), LATAM (Chile, Peru, Brazil), and North America (Canada, US). A three-hub ROC architecture serves 90% of deployments:

- Hub 1 (Sydney/Melbourne, OCI Australia): Serves APAC mining (iron ore, coal), oil and gas (offshore), and power generation. 25 sites, 60–80 concurrent ROC sessions.

- Hub 2 (São Paulo, OCI LATAM): Serves copper mining (Chile), lithium operations (Argentina), oil (Brazil). 15 sites, 40–50 sessions.

- Hub 3 (Ashburn/Phoenix, OCI NA): Serves US/Canada grid operations, oil sands, and mining. 20 sites, 50–70 sessions.

Each hub runs a dedicated OCI compute cluster: 2 x BM.Standard.E5 (dual-socket AMD EPYC) for session VMs, 1 x BM.DenseIO2 for storage. OCI’s isolated networking (not shared AWS-style tenancy) is critical for NERC CIP Reliability Standard CIP-005-7a compliance (Section 5.4.1: Protect SCADA networks with ‘discrete’ perimeter devices).

Latency from field to hub (via private link or VSAT):

- Fiber private link: 10–30ms (Perth to Sydney: 25ms; São Paulo core to field: 15ms).

- VSAT (C-band, 72ms inherent delay): 72ms one-way, 144ms round-trip. Starlink reduces this to 40ms.

- 4G LTE (remote site as fallback): 60–100ms, unreliable uplink (1–3 Mbps).

Bandwidth and Satellite Optimization

The Thinfinity architecture achieves 50–200 Kbps per session via four techniques:

1. Adaptive JPEG-XR and H.265 Codec: Detects video regions (SCADA gauge) vs. static regions (menu bar). Static regions use lossless PNG-delta (2–5 Kbps). Video regions use lossy H.265 at 10fps, 800×600, 50 Kbps baseline. The codec automatically scales down to 640×480 if the link drops below 100 Kbps.

2. Edge Caching at OCI Region: A 50GB cache of common SCADA HMI screens, historian graphs, and mine plan tiles is pre-compressed at the OCI region. First access downloads full screen (50 Kbps, 2 seconds over VSAT). Subsequent access: delta updates only (5 Kbps, 200ms). Reduces bandwidth 70% for repeated access.

3. Client-Side Prediction Engine: The HTML5 client predicts the next screen state based on operator input (e.g., ‘user clicked Dashboard tab, predict Dashboard VM will load’). Pre-render locally, load remotely in background. Apparent latency drops by 400ms—masked buffering.

4. VSAT-Specific QoS Shaping: VSAT (Intelsat, Viasat) exhibits burst latency: 50ms normal, 500ms under rain fade. Thinfinity detects QoS degradation and throttles video codec to 25fps. On Starlink, rain fade lasts <5 seconds; Thinfinity pauses video and resumes on link recovery.
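The region-based codec selection in technique 1 can be sketched as a small decision function. This is an illustration of the thresholds quoted above, not Thinfinity's actual implementation; `EncodingPlan` and `plan_encoding` are hypothetical names, and applying the 640×480 fallback to static regions as well is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EncodingPlan:
    codec: str
    width: int
    height: int
    fps: int


def plan_encoding(region_is_video: bool, link_kbps: float) -> EncodingPlan:
    """Pick an encoding for one screen region from the thresholds in the text."""
    # Below 100 Kbps the viewport scales down from 800x600 to 640x480.
    width, height = (640, 480) if link_kbps < 100 else (800, 600)
    if region_is_video:
        # Moving content: lossy H.265 at 10 fps.
        return EncodingPlan("h265", width, height, 10)
    # Static content: lossless deltas, so no frame rate applies.
    return EncodingPlan("png-delta", width, height, 0)


print(plan_encoding(region_is_video=True, link_kbps=2_000))
print(plan_encoding(region_is_video=True, link_kbps=80))
print(plan_encoding(region_is_video=False, link_kbps=2_000))
```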

Real-world impact from Rio Tinto pilot (Perth ROC, Pilbara mining, Starlink link):

| Metric | Traditional RDP | Thinfinity Workspace |
|---|---|---|
| Baseline Bandwidth | 2.1 Mbps | 0.15 Mbps |
| Peak Bandwidth (Dashboard Change) | 4.8 Mbps | 0.45 Mbps |
| Historian Trend Load (30s of data) | 12 seconds | 3 seconds |
| Screen Drag Latency | 800–1200ms | 150–300ms |
| Concurrent Sessions (3 Mbps Starlink) | 1 | 15 |

OCI Region Selection & Network Isolation

OCI provides 33 regions globally. For mining/energy ROC, four matter:

- OCI Australia (Sydney, Melbourne): Primary for APAC. 25ms latency to Perth, 18ms to Indonesian offshore. Meets Australian data residency requirements (ASPI critical infrastructure rules).

- OCI LATAM (São Paulo): LATAM mining. 5ms to São Paulo core, 40ms to Chilean copper belt. No US data residency exposure (important for Chilean sovereign data rules).

- OCI Ashburn (US East): NA grid and oil. 30ms to East Coast substations. US Patriot Act exposure (FedRAMP certification on roadmap).

- OCI Frankfurt (Europe): Secondary for EMEA gas/power. 45ms to North Sea platforms.

Network isolation: OCI Compute runs in a customer virtual cloud network (VCN). Within VCN, segment ROC VDI on a private subnet (10.1.0.0/24). SCADA backend systems on 10.2.0.0/24, jump server (Bastion) on 10.3.0.0/24. Network ACLs restrict ROC tier to jump server only (no direct SCADA access per ISA 62443 zone model).
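The zone rule above (VDI may reach only the jump server, and only the jump server may reach SCADA) can be expressed as a small allow-list check. This is a sketch using Python's standard `ipaddress` module and the subnets from the text. It models direction only and is not an OCI security list or NSG definition; `zone_of` and `is_allowed` are illustrative names.

```python
import ipaddress

VDI = ipaddress.ip_network("10.1.0.0/24")    # ROC VDI sessions
SCADA = ipaddress.ip_network("10.2.0.0/24")  # SCADA backend systems
JUMP = ipaddress.ip_network("10.3.0.0/24")   # jump server (bastion)

# Allowed (source zone, destination zone) pairs; everything else is denied.
ALLOWED = {(VDI, JUMP), (JUMP, SCADA)}


def zone_of(addr: str):
    """Return the zone network containing addr, or None if it is outside all zones."""
    ip = ipaddress.ip_address(addr)
    for net in (VDI, SCADA, JUMP):
        if ip in net:
            return net
    return None


def is_allowed(src: str, dst: str) -> bool:
    """Default-deny check for a new connection from src to dst."""
    src_zone, dst_zone = zone_of(src), zone_of(dst)
    if src_zone is None or dst_zone is None:
        return False
    return (src_zone, dst_zone) in ALLOWED


print(is_allowed("10.1.0.15", "10.3.0.5"))   # VDI -> jump server
print(is_allowed("10.1.0.15", "10.2.0.20"))  # VDI -> SCADA directly
```

A default-deny allow-list keeps the policy auditable: any path not listed in `ALLOWED` is rejected, including traffic from addresses outside the three subnets.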

Part 3: SCADA/DCS/HMI Integration—Full Application Compatibility

ROC VDI success hinges on one question: Can you run your actual SCADA software in a VDI session? The answer is yes, but the integration details matter.

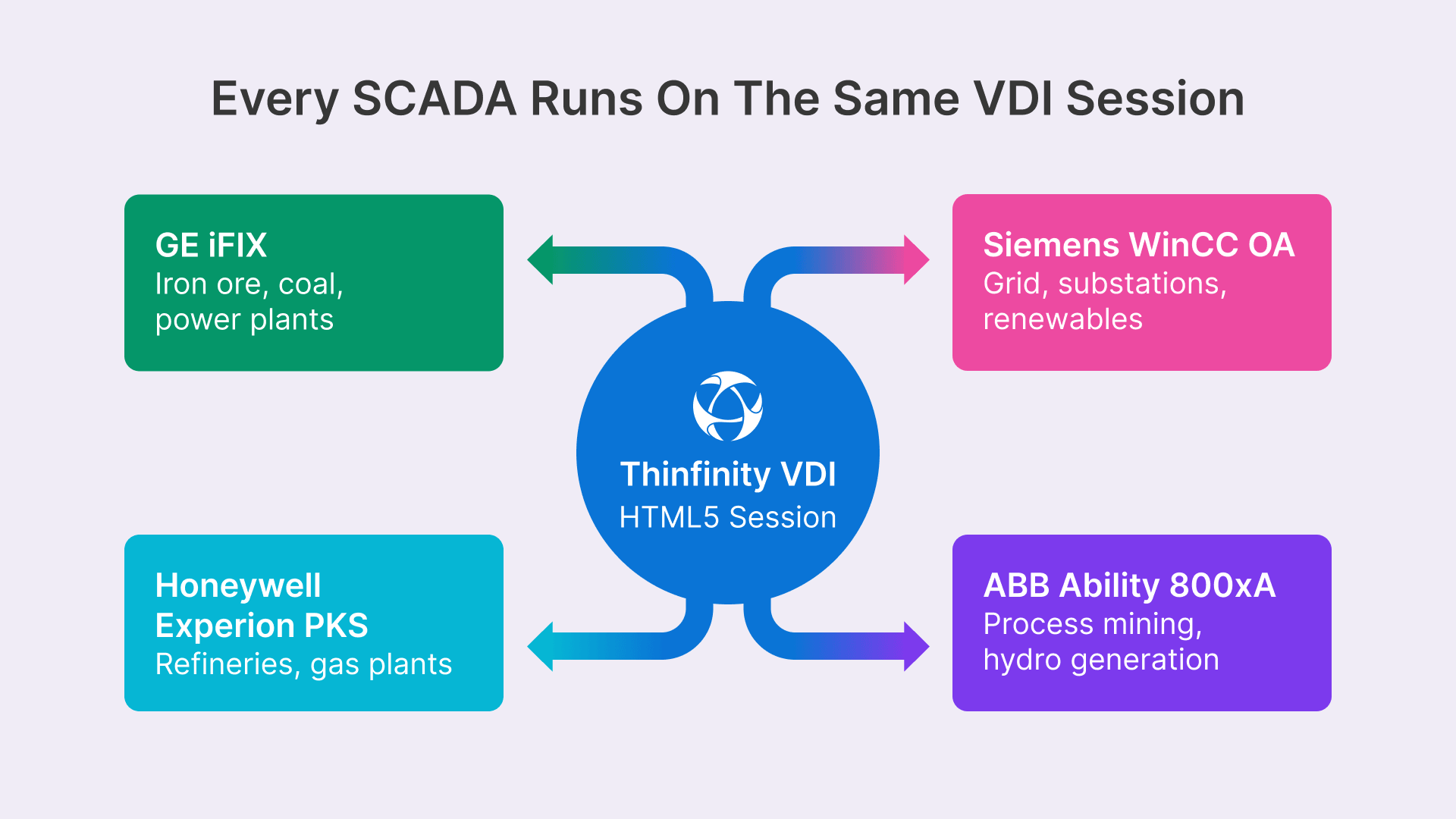

Mining and energy use four dominant SCADA platforms:

GE iFIX (Proficy) – Iron ore, coal processing, power plants. Licensing: per-CPU (socket-locked on ruggedized PCs, not ideal for cloud VMs). Solution: Floating license server + Thinfinity GPU session. Cost: $2,000–$4,000 per named user/year.

Siemens WinCC OA (SCADA Open Architecture) – Grid operations, substations, renewable farms. Licensing: floating. Easy cloud migration. GPU support: native via OpenGL. Cost: $1,500–$3,000 per concurrent session.

Honeywell Experion PKS – Oil refining, gas plants. Licensing: named user or process-based. Requires high-performance frame buffer (2560×1440). Thinfinity GPU session recommended. Cost: $3,500–$7,000 per user/year.

ABB Ability 800xA – Mining process automation, power plants, hydropower. Licensing: point-based (no. of automation nodes). VDI-friendly. GPU optional (3D plant models benefit). Cost: $4,000–$8,000 per named user/year.

Integration pattern (Rio Tinto Excellence in Mining model, deployed 2022–2023):

- Thinfinity session runs on OCI BM.Standard.E5 (48 cores, 384 GB RAM). GPU-accelerated sessions on BM.GPU.B1 (2 x NVIDIA A10, dual A100 for 3D models).

- SCADA application (e.g., GE iFIX) runs as native Windows/Linux service inside the session VM.

- Floating license server (separate OCI instance, licensed for 50 concurrent users) issues licenses on session login.

- VDI session authenticates to a jump server (bastion host) first. Jump server logs all SCADA access (session recording, keyboard+mouse) per NERC CIP-005 audit trail requirement.

- Historian data (OSIsoft PI, Aveva Historian, InfluxDB) accessed via OPC-UA protocol. Jump server relays OPC-UA calls to historian backend (on 10.2.0.0/24 network). SCADA HMI renders historian trends inside the Thinfinity HTML5 client.

- Mine planning software (Vulcan, MineSight, Datamine) requires GPU. Thinfinity GPU session pre-allocated for engineer. GPU memory: 2–4 GB per session (shared on A10 card across 4 users).
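The floating-license step in the pattern above amounts to a pool of concurrent seats that is checked at session login and freed at logout. A minimal sketch of that idea follows. Real vendor license servers differ, and `FloatingLicensePool` is a hypothetical class used only for illustration.

```python
class FloatingLicensePool:
    """Tracks concurrent seats; a seat is held from session login to logout."""

    def __init__(self, seats: int) -> None:
        self.seats = seats
        self.holders = set()

    def acquire(self, user: str) -> bool:
        """Grant a seat if one is free; a repeat login keeps the existing seat."""
        if user in self.holders:
            return True
        if len(self.holders) >= self.seats:
            return False  # pool exhausted, login must wait
        self.holders.add(user)
        return True

    def release(self, user: str) -> None:
        """Free the seat at logout; releasing twice is harmless."""
        self.holders.discard(user)


pool = FloatingLicensePool(seats=50)  # 50 concurrent users, as in the text
print(pool.acquire("engineer-01"))
```

Because seats are tied to sessions rather than devices, a 100-person shift rotation can share 50 seats as long as no more than 50 people are logged in at once.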

Published App Model vs. Full Desktop

Two deployment strategies exist:

Strategy A: Full Desktop (Recommended for ROC). Engineer logs in, gets full Windows/Linux desktop, launches GE iFIX, OSIsoft PI, and Datamine from Start menu. Gives flexibility (ad-hoc historian queries, script execution) but requires 3–5 GB RAM per session, 200 Kbps baseline.

Strategy B: Published App. Only GE iFIX icon appears on login. Engineer clicks, iFIX launches. Saves 40% memory (server can host 60 sessions instead of 35). But blocks historian access, script debugging, and multi-app workflows. Not suitable for mining ROC (engineers constantly switch between mine planning, historian, and iFIX).

BHP and Rio Tinto both chose Strategy A. Justification: ROC engineers rotate every 2 weeks; they need instant familiarity with the full desktop environment, not a locked-down published-app experience.

Part 4: OT/IT Security Architecture—ISA/IEC 62443 Zone Model

SCADA systems face two regulatory regimes:

- NERC CIP (US grid operators): Mandatory for generators >1.5 GW and all transmission. ISA 62443 is a framework; NERC CIP is prescriptive law (Section 215 of the Federal Power Act).

- ISA/IEC 62443: Global industrial control standard. Levels 0–4 (target level is 3.5 for mining/oil, ‘balanced security’).

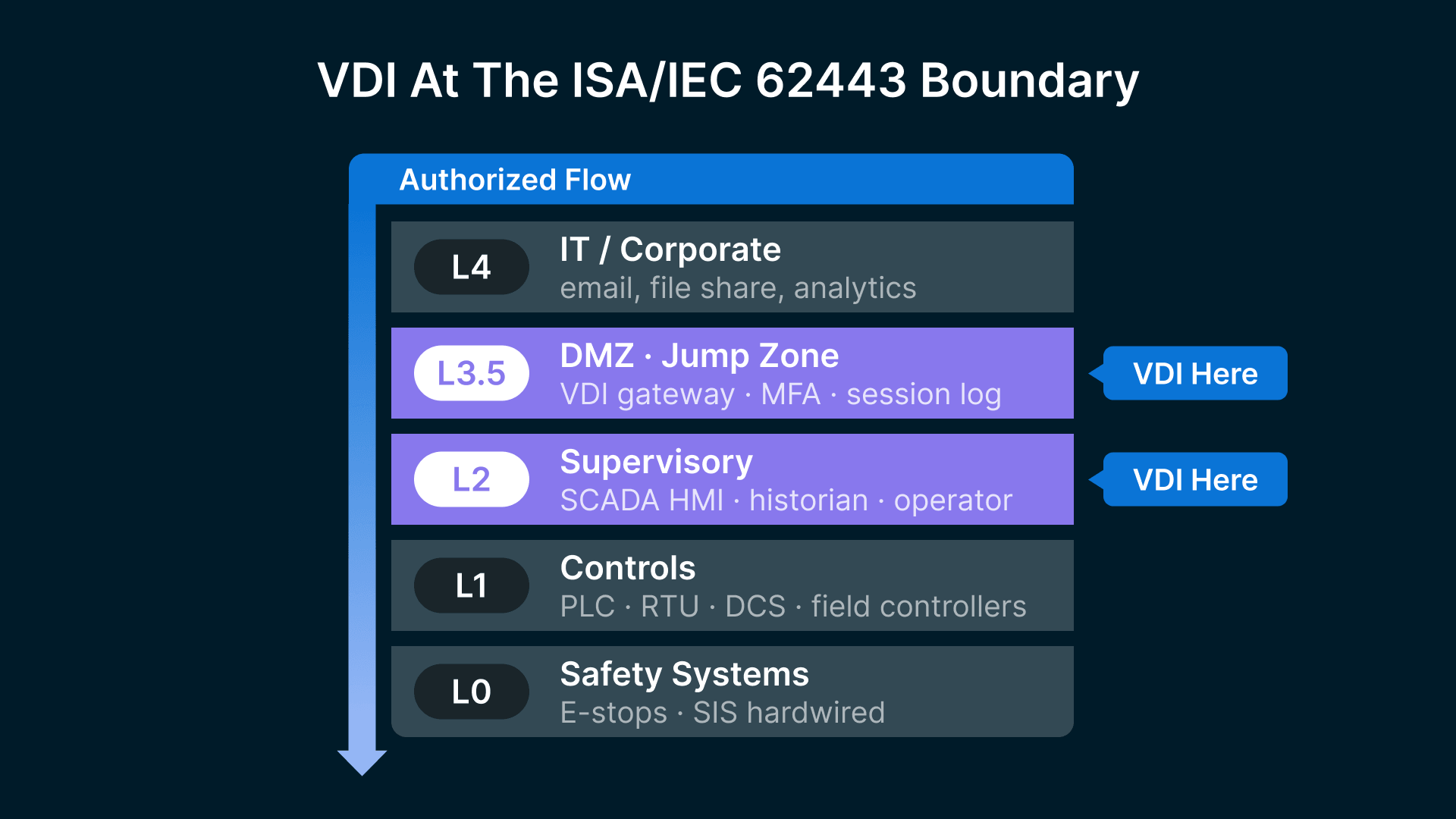

VDI placement in the ISA 62443 zone architecture:

| ISA 62443 Zone | Physical Infrastructure | VDI Role |
|---|---|---|
| Level 0 (Safety Systems) | E-stops, SIS (Safety Instrumented Systems) | No VDI—hardwired. |
| Level 1 (SCADA Controls) | PLC, RTU, DCS, field controllers | No VDI—direct connect to Level 2. |
| Level 2 (Supervisory) | SCADA HMI, historian, operator stations | VDI runs here—monitored operator access. |
| Level 3.5 (DMZ/Jump Zone) | Firewall, jump server, intrusion detection | VDI gateway—session recording, MFA, log aggregation. |
| Level 4 (IT/Corporate) | Email, file share, web, analytics | Traditional IT security model. |

VDI sits at the boundary between Level 2 (SCADA HMI) and Level 3.5 (DMZ). This boundary is critical: a VDI session accessed by a Perth engineer must never allow lateral movement to other SCADA systems or command injection.

Skilled adversaries from state-sponsored groups are hiding in critical infrastructure and hacktivists and criminal groups are increasingly using ransomware and exploiting known vulnerabilities, weak remote access configurations, and exposed OT assets to penetrate industrial environments.

Zero Trust Session Authentication

Every ROC login is challenged by three factors:

- Identity: Corporate AD/LDAP (Perth mining ops team, ~50 users). MFA via Okta or Azure AD—TOTP or push notification.

- Device: Thin client hardware ID (Advantech serial number). Device must be registered and not jailbroken.

- Context: Geolocation (Perth office, <5km radius) or VPN (Perth office VPN subnet 10.0.0.0/24). Block login from unknown IP.

Thinfinity Workspace enforces this at session startup: Challenge → Authenticate → Allocate VM session.
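The three-factor challenge can be sketched as a single authorization function. This is an illustration under stated assumptions, not Thinfinity's API: `LoginAttempt` and `authorize` are hypothetical names, the MFA result and geolocation distance are assumed to come from upstream services, and the subnet and radius are the values quoted above.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional

OFFICE_VPN = ipaddress.ip_network("10.0.0.0/24")  # Perth office VPN subnet
MAX_DISTANCE_KM = 5.0                             # geolocation radius


@dataclass
class LoginAttempt:
    mfa_passed: bool        # identity: AD/LDAP login plus TOTP or push
    device_serial: str      # device: thin client hardware ID
    source_ip: str          # context: where the request comes from
    distance_from_office_km: Optional[float] = None  # None if unknown


def authorize(attempt: LoginAttempt, registered_serials: set) -> bool:
    """Allow a session only when identity, device, and context all pass."""
    identity_ok = attempt.mfa_passed
    device_ok = attempt.device_serial in registered_serials
    on_vpn = ipaddress.ip_address(attempt.source_ip) in OFFICE_VPN
    near_office = (
        attempt.distance_from_office_km is not None
        and attempt.distance_from_office_km < MAX_DISTANCE_KM
    )
    return identity_ok and device_ok and (on_vpn or near_office)


registered = {"ADV-001"}  # example serial, not a real device
print(authorize(LoginAttempt(True, "ADV-001", "10.0.0.42"), registered))
```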

Jump Server Pattern & Session Recording

Architecture:

Engineer Thin Client → [VSAT/Fiber] → OCI VDI Session → [Private Link] → Jump Server → [Firewall ACL] → SCADA Backend (10.2.0.0/24)

The jump server (a hardened Bastion host, OCI Compute Standard E5 instance, 8 cores) is the only path to SCADA systems. Every command is logged:

- SSH session log: login time, user, source IP, action (e.g., ‘connected to GE iFIX HMI server’).

- SCADA command log: extracted from VDI session recording. If engineer types ‘setpoint := 95.5’ in GE iFIX, that command is captured, timestamped, and written to /var/log/scada-audit.

- Session video recording: Optional for mining; enable it wherever NERC CIP audit trail evidence is required. Thinfinity records screen + audio. 8-hour shift = 2–3 GB per session.

Log aggregation: All three logs feed to a Splunk or ELK cluster (separate OCI instance, 10.3.0.0/24). NERC CIP audit requirements (CIP-005-7a Section 5.1.2): retain audit logs for 90 days minimum. Mining ROC model: retain 12 months (regulatory hold + business intelligence).
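The two retention windows above translate into a simple purge rule. The sketch below assumes retention is counted in whole days from the log date and approximates 12 months as 365 days; `may_purge` is an illustrative helper, not a Splunk or ELK setting.

```python
from datetime import date, timedelta

NERC_CIP_MIN_DAYS = 90  # minimum retention quoted in the text
MINING_ROC_DAYS = 365   # "12 months", approximated as 365 days


def may_purge(log_date: date, today: date, retention_days: int) -> bool:
    """True once a log entry is older than the retention window."""
    return today - log_date > timedelta(days=retention_days)


entry = date(2024, 1, 1)
print(may_purge(entry, date(2024, 4, 1), NERC_CIP_MIN_DAYS))  # 91 days old
print(may_purge(entry, date(2024, 4, 1), MINING_ROC_DAYS))    # still inside 12 months
```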

USB, Clipboard, and File Transfer Policies

Ruggedized field workstations historically had USB ports (for GIS data import, instrument download). ROC VDI must block or control this:

USB Devices: Blocked by default. Exception: engineers with ‘GIS Data Import’ role can enable USB read-only (no write). Keyboard/mouse (HID) always allowed.

Clipboard Sync: Bidirectional by default (Perth engineer copy-pastes historian data to local Excel). Can be restricted by role (e.g., system administrators: unidirectional server→client only, to block data exfil).

File Transfer: Disabled by default. Engineering role: can download historian CSV exports (<10 MB/day). Admin role: can upload script patches (code signed, SHA-256 verified).

Enforcement: Thinfinity session runs in a sandbox. USB/clipboard/file I/O calls intercepted at kernel level (Windows driver). Policies applied per user (LDAP group membership).
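The per-role rules above can be captured as a policy table keyed by LDAP group. This sketch only models the lookup; the kernel-level interception is outside its scope. The group names and the `IoPolicy` structure are assumptions for illustration, and the first matching group wins, so merging several groups is not handled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IoPolicy:
    usb: str = "blocked"              # "blocked" or "read-only"
    clipboard: str = "bidirectional"  # or "server-to-client"
    download_mb_per_day: int = 0      # 0 means file download is disabled
    signed_upload: bool = False       # upload of code-signed script patches


DEFAULT = IoPolicy()

# Hypothetical LDAP group names mapped to the roles described in the text.
ROLE_POLICIES = {
    "gis-data-import": IoPolicy(usb="read-only"),
    "engineering": IoPolicy(download_mb_per_day=10),
    "admin": IoPolicy(clipboard="server-to-client", signed_upload=True),
}


def policy_for(groups: list) -> IoPolicy:
    """Return the policy of the first matching group, else the default."""
    for group in groups:
        if group in ROLE_POLICIES:
            return ROLE_POLICIES[group]
    return DEFAULT


print(policy_for(["engineering"]))
print(policy_for(["contractors"]))
```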

Part 5: Total Cost of Ownership—5-Year ROC VDI Model

Two reference models illustrate real-world scale: a 500-endpoint mining operation (5 sites, 100 engineers across FIFO/LILO shift rotation) and a 2,000-endpoint energy operation (20 grid control centers, 200 operators).

500-Endpoint Mining Operations

| Cost Category | Y1 ($K) | Y2 ($K) | Y3 ($K) | Y4 ($K) | Y5 ($K) | Total ($K) |
|---|---|---|---|---|---|---|
| Capex: Thin Clients | 175 | 0 | 100 | 0 | 50 | 325 |
| Capex: OCI Compute | 240 | 0 | 0 | 0 | 0 | 240 |
| Capex: License Server | 20 | 0 | 0 | 0 | 0 | 20 |
| Opex: OCI Compute | 0 | 42 | 42 | 42 | 42 | 168 |
| Opex: Storage | 0 | 8 | 8 | 8 | 8 | 32 |
| Opex: Thinfinity | 0 | 25 | 25 | 25 | 25 | 100 |
| Opex: SCADA Licenses | 0 | 125 | 125 | 125 | 125 | 500 |
| Opex: IT Staff | 75 | 75 | 75 | 75 | 75 | 375 |
| Opex: Network | 0 | 35 | 35 | 35 | 35 | 140 |
| Opex: Managed SOC | 0 | 50 | 50 | 50 | 50 | 200 |
| Avoided: IT Travel | 0 | 180 | 180 | 180 | 180 | 720 |
| Avoided: Workstations | 0 | 212 | 0 | 0 | 0 | 212 |
| Avoided: Downtime | 0 | 500 | 500 | 500 | 500 | 2000 |

5-Year Total Cost (500 endpoints): $435K Capex + $752K Opex = $1.187M. Cost per endpoint: $2,374.

Baseline (field model): $2.8M hardware + $1.8M annual IT travel × 5 years = $11.8M. Cost per endpoint: $23,600.

Savings: 90%.

2,000-Endpoint Energy Operations

| Cost Category | Y1 ($K) | Y2 ($K) | Y3 ($K) | Y4 ($K) | Y5 ($K) | Total ($K) |
|---|---|---|---|---|---|---|
| Capex: Thin Clients | 700 | 0 | 400 | 0 | 200 | 1300 |
| Capex: OCI Compute | 960 | 0 | 0 | 960 | 0 | 1920 |
| Capex: License Server | 40 | 0 | 0 | 0 | 0 | 40 |
| Opex: OCI Compute | 0 | 168 | 168 | 168 | 168 | 672 |
| Opex: Storage | 0 | 32 | 32 | 32 | 32 | 128 |
| Opex: Thinfinity | 0 | 100 | 100 | 100 | 100 | 400 |
| Opex: SCADA Licenses | 0 | 400 | 400 | 400 | 400 | 1600 |
| Opex: IT Staff | 150 | 150 | 150 | 150 | 150 | 750 |
| Opex: Network | 0 | 80 | 80 | 80 | 80 | 320 |
| Opex: Managed SOC | 0 | 120 | 120 | 120 | 120 | 480 |
| Avoided: IT Travel | 0 | 2016 | 2016 | 2016 | 2016 | 8064 |
| Avoided: Workstations | 0 | 900 | 0 | 0 | 0 | 900 |
| Avoided: Downtime | 0 | 1000 | 1000 | 1000 | 1000 | 4000 |

5-Year Total Cost (2,000 endpoints): $2.26M Capex + $4.8M Opex = $7.06M. Cost per endpoint: $3,530.

Baseline: $9M hardware + $10M annual IT travel × 5 years = $59M. Cost per endpoint: $29,500.

Savings: 88%.
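The per-endpoint and savings figures for both models come from two short formulas. The sketch below reproduces them from the 5-year totals and baselines stated in the summary lines above, not from the itemized table rows; the function names are illustrative.

```python
def cost_per_endpoint(total_cost: float, endpoints: int) -> float:
    """Five-year cost divided across all endpoints."""
    return total_cost / endpoints


def savings_pct(roc_cost: float, baseline_cost: float) -> float:
    """Percentage reduction of the ROC model versus the field baseline."""
    return (1 - roc_cost / baseline_cost) * 100


# name: (endpoints, 5-year ROC total, 5-year field baseline), all in USD
scenarios = {
    "mining": (500, 1_187_000, 11_800_000),
    "energy": (2_000, 7_060_000, 59_000_000),
}

for name, (endpoints, roc, baseline) in scenarios.items():
    print(
        f"{name}: ROC ${cost_per_endpoint(roc, endpoints):,.0f}/endpoint, "
        f"baseline ${cost_per_endpoint(baseline, endpoints):,.0f}/endpoint, "
        f"savings {savings_pct(roc, baseline):.0f}%"
    )
```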

Key drivers of TCO improvement:

- Hardware: Thin clients ($350) vs. ruggedized workstations ($4,500). 92% reduction.

- IT labor: Centralized support (1 admin per 500 endpoints) vs. field visits (1 tech per 50 endpoints). 80% reduction.

- Downtime avoidance: ROC redundancy (2x failover session servers) eliminates single-point failures. Avoid $100K–$500K/hour grid impact for utility operators.

- Licensing: Floating licenses scale with user count, not device count. 50% cost reduction vs. per-device model.

Part 6: 24-Week Deployment Roadmap—From Pilot to Fleet

Phase 1: ROC Pilot (Weeks 1–8)

- Weeks 1–2: Design & Procurement. Map mine/grid topology. Procure 20 Advantech thin clients, 1 x BM.Standard.E5 OCI Compute. Order VSAT link (Intelsat or Viasat) if no existing satellite backup. Estimate: $25K capex, 2 FTE weeks.

- Weeks 2–4: OCI Infrastructure. Provision VCN, subnets, security groups. Deploy Thinfinity Workspace on BM.E5. Install GE iFIX floating license server. Set up Okta/Azure AD connector. Jump server hardening (CIS Benchmark). Estimate: 3 FTE weeks.

- Weeks 4–6: SCADA Integration. Migrate GE iFIX to VDI session VM. Configure OSIsoft PI OPC-UA relay through jump server. Test historian access (load 30 days of trending data, verify <5 second response). Test GPU session for mine planning (Datamine CAD). Estimate: 2 FTE weeks (requires GE iFIX/OSIsoft SME).

- Weeks 6–8: User Testing & Hardening. 10 operators (mining planners, geologists, SCADA engineers) access ROC from Perth office. Log all sessions, identify latency bottlenecks, tweak Thinfinity codec settings. Capture feedback on workflow changes (e.g., historian lag, 3D model rendering). Estimate: 1.5 FTE weeks.

Phase 2: Multi-Site + NERC CIP Validation (Weeks 9–16)

- Weeks 9–10: Expand to 3 Mine Sites. Deploy private fiber or VSAT to 3 remote mine sites. Bring 60 thin clients online. Expand OCI to 2 x BM.E5. Test failover (kill one BM.E5, verify session continuity). Estimate: 2 FTE weeks.

- Weeks 11–13: Security Audit (ISA/IEC 62443 Level 3 assessment). Engage third-party assessor (e.g., DNV, SGS). Verify zone isolation (VDI → jump server → SCADA), session recording, MFA. Document compliance gaps. Estimate: 1 FTE week (assessment external cost ~$15K).

- Weeks 14–15: NERC CIP Readiness (for energy operators). Validate audit trail (CIP-005-7a Section 5.1.2), session logs (90-day retention minimum), access control (CIP-005-7a Section 4.2). Update Information Security Plan. Estimate: 1 FTE week (energy ops only).

- Week 16: Cutover readiness. All 3 sites operational. No field visits required for SCADA access. Establish on-call support rotation (Perth ROC core team). Estimate: 0.5 FTE weeks.

Phase 3: Full Fleet + Satellite Optimization (Weeks 17–24)

- Weeks 17–20: Expand to All 5 Mine Sites (or 20 grid control centers for energy). Deploy Thinfinity to all remote locations. Optimize VSAT codec settings (measure actual bandwidth usage, fine-tune). Test Starlink failover. Migrate all 500 endpoints (or 2,000 for energy) to ROC. Estimate: 4 FTE weeks.

- Weeks 21–22: Performance Tuning & Capacity Planning. Baseline session density (max sessions per compute node). Run load tests: 80% capacity, measure latency. Plan for seasonal peaks (harvest season for mining, summer peak for grid). Estimate: 1.5 FTE weeks.

- Weeks 23–24: Knowledge Transfer & Handoff. Train 2 on-call support engineers (8-hour shift rotation). Document runbooks: session troubleshooting, SCADA failover, VSAT link recovery. Establish SLA: 99.5% availability (about 3.6 hours/month of allowable downtime). Estimate: 1.5 FTE weeks.

Total Effort: 20 FTE weeks (5 FTE × 4 weeks), the sum of the phase estimates above. Cost: ~$150K in labor + $50K in tools/licenses = $200K total.

Part 7: Real-World Scenarios from Mining and Energy ROCs

Scenario 1: Geologist Accessing Mine Planning from Perth ROC

Tuesday, 9:15 AM, Perth. A geologist logs into the ROC thin client. MFA challenge (Okta push). Granted access to GIS workstation (GPU session, 2 x NVIDIA A100). Opens Datamine CAD (3D mine model, 800 MB, 2560×1440 viewport). Spins the pit, zooms to a specific ore lens. GPU session outputs H.265 video: 10fps, 1200×900 (client scales to 2560×1440), 180 Kbps. Apparent latency: 250ms. Geologist perceives it as smooth (human threshold is ~300ms for 3D rotation).

Accesses historian to correlate pit geometry with blast vibration data. Pulls 7 days of waveform data (OSIsoft PI, 500 sensors). Query hits OCI cache (yesterday’s query = cache hit, 5 Kbps delta). Vibration graph loads in 2 seconds. Downloads CSV, pastes into GIS analysis tool. Session recording captures the entire workflow (stored in OCI Object Storage, auditable for NERC CIP).

Estimated cost: GIS workstation session costs $0.18/hour (BM.GPU compute + storage I/O). 8-hour shift = $1.44. Field geologist at site (FIFO cost = $100/day + $50 accommodation = $150/day cost). ROC saves 150×52/250 = 31.2 geologist-days/year = $4,680 per geologist.

Scenario 2: Grid Operator Managing 20 Substations Across a State

Thursday, 2:30 PM, Brisbane BHP control center. A grid dispatcher monitors 20 substations across Queensland. Opens Siemens WinCC OA dashboard (5 windows, each showing real-time SCADA data: voltage, current, breaker status). WinCC consumes 120 Kbps baseline (vector graphics, not video). Dashboard refreshes every 2 seconds (20 data point updates, 6 Kbps per update).

A storm approaches Substation 14 (150km north). Dispatcher receives an alert (historian trend shows voltage sag). Clicks Substation 14 in WinCC, zooms to electrical schematic. Manually reconfigures a capacitor bank (changes setpoint, logs the command). The command is:

[14:32:45 USER=Dispatcher1 CMD=WinCC:SetCapacitorBankVars(14, C1VoltageSetpoint, 240.5 kV)]

This log line is captured by the session recording engine and written to Splunk (audit trail). NERC CIP auditors can replay the entire dispatcher shift (10 hours = 40 GB compressed video, searchable by command name).

Estimated availability benefit: Without ROC, dispatcher must be on-site at Brisbane control center (hard to retain staff, expensive turnover). ROC enables rotation of remote operators (smaller team, cross-trained). Reduces fatigue (operators work from home 2 days/week, commute 2 days). Reduces absenteeism by 25% per HR data (energy industry).

Scenario 3: Third-Party Vendor Firmware Update

Wednesday, 1:00 AM (off-hours maintenance window). A GE service engineer arrives at the Perth ROC to push a firmware patch to a GE Automation Controller (PAC) on a remote mine site, 250km away. This is a critical update for the ventilation control system. Vendor has ‘GE Service Engineer’ LDAP group membership.

Vendor logs in to VDI. MFA via Okta push notification. Session allocated to a BM.Standard.E5 instance in Sydney OCI region. Latency to Perth vendor: 25ms (sub-100ms, imperceptible). Vendor clicks shortcut to ‘GE Remote Automation Tool’ (published app, vendor group only). Tunnels through jump server to PAC’s management network (10.2.0.0/24). Strict firewall rules: read-only SNMP (no writes), software upload to /tmp only (requires GE code-signing certificate, PKI validated).

Vendor uploads firmware binary (10 MB, GE-signed). Jump server validates SHA-256 and certificate chain. Patch applies. PAC reboots (3-minute downtime, pre-coordinated). Session log records all actions:

[01:03:22 USER=GEEngineer ACTION=FIRMWARE_UPLOAD SIZE=10485760 SIGNATURE=VALID]

[01:04:15 USER=GEEngineer ACTION=REBOOT_REQUEST TARGET=PAC_14 RESTART_TIME=180s]

[01:07:22 USER=GEEngineer ACTION=VERIFY_ONLINE TARGET=PAC_14 STATUS=SUCCESS]

After reboot, WinCC OA confirms PAC online. Vendor session ends. Logs retained (90-day hold NERC CIP, 12-month mining standard). Screen recording stored in OCI Object Storage (compressed, searchable).
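Bracketed `KEY=VALUE` log lines like the ones in this scenario are easy to turn into structured records before they reach a log cluster. The parser below is a sketch that assumes values contain no spaces. The sample line is a shortened entry in the same shape, and `parse_audit_line` is an illustrative helper rather than part of Thinfinity or Splunk.

```python
import re

# "[HH:MM:SS KEY=VALUE KEY=VALUE ...]"
LINE_RE = re.compile(r"^\[(\d{2}:\d{2}:\d{2})\s+(.*)\]$")


def parse_audit_line(line: str) -> dict:
    """Split one audit line into a dict with a 'time' entry plus its fields."""
    match = LINE_RE.match(line.strip())
    if match is None:
        raise ValueError(f"unrecognized audit line: {line!r}")
    record = {"time": match.group(1)}
    for token in match.group(2).split():
        key, _, value = token.partition("=")
        record[key] = value
    return record


entry = parse_audit_line("[01:07:22 USER=GEEngineer TARGET=PAC_14 STATUS=SUCCESS]")
print(entry)
```

Lines whose values contain spaces, such as a command with arguments, would need a stricter grammar than this whitespace split.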

ROC advantage over field workstations: vendor on-site could plug directly into SCADA with no audit trail. ROC enforces jump server gateway, logs every command, validates firmware cryptographically before deployment. Reduces supply chain attack surface.
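The SHA-256 part of that firmware check is straightforward with Python's standard library. The sketch below covers only the digest comparison. Certificate-chain validation is a separate step that is not shown, and `sha256_matches` and the sample bytes are illustrative.

```python
import hashlib
import hmac


def sha256_matches(payload: bytes, expected_hex: str) -> bool:
    """Compare the payload's SHA-256 digest with a published hex digest."""
    actual = hashlib.sha256(payload).hexdigest()
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(actual, expected_hex.strip().lower())


firmware = b"example firmware image"  # stand-in bytes, not a real image
# In practice the expected digest is published by the vendor out of band.
published = hashlib.sha256(firmware).hexdigest()

print(sha256_matches(firmware, published))
print(sha256_matches(firmware + b"tampered", published))
```

A digest match proves integrity only; authenticity still depends on verifying the vendor's signature over the image.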

Scenario 4: Emergency Failover (Primary Hub Failure)

Friday, 11:45 AM. Sydney OCI region experiences a network partition (rare, but Thinfinity must handle it). 40 concurrent sessions in Perth mine ROC suddenly lose connection.

Failover procedure (automated, <30 seconds):

- Thinfinity gateway detects heartbeat loss from primary BM.E5 servers (two instances failed simultaneously—network outage, not instance failure).

- Gateway automatically redirects incoming session requests to backup OCI region (Melbourne, 800km away, latency increase: 25ms → 80ms).

- Session VMs are pre-staged (warm standby, mirrors primary state hourly). Melbourne receives redirected sessions. Operators perceive a ‘flicker’ (2–3 second screen freeze), then resume.

- Session recording is re-anchored to Melbourne Splunk cluster (OCI Object Storage cross-region replica ensures no log loss).
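The heartbeat-and-redirect logic in the steps above can be sketched as a small router. This is a simplified model under stated assumptions: the 10-second heartbeat timeout is a placeholder rather than a documented Thinfinity default, time is passed in explicitly to keep the example deterministic, and `RegionRouter` is a hypothetical class.

```python
class RegionRouter:
    """Routes new sessions to the primary region unless its heartbeat is stale."""

    def __init__(self, primary: str, backup: str, timeout_s: float = 10.0) -> None:
        self.primary = primary
        self.backup = backup
        self.timeout_s = timeout_s  # assumed threshold for illustration
        self.last_heartbeat = {}

    def heartbeat(self, region: str, now: float) -> None:
        """Record that a region's session servers answered at time now."""
        self.last_heartbeat[region] = now

    def is_healthy(self, region: str, now: float) -> bool:
        seen = self.last_heartbeat.get(region)
        return seen is not None and now - seen <= self.timeout_s

    def target(self, now: float) -> str:
        """Primary while healthy, otherwise the warm-standby backup."""
        if self.is_healthy(self.primary, now):
            return self.primary
        return self.backup


router = RegionRouter(primary="sydney", backup="melbourne")
router.heartbeat("sydney", now=0.0)
print(router.target(now=5.0))   # heartbeat still fresh
print(router.target(now=20.0))  # heartbeat stale, redirect
```

Once the primary resumes sending heartbeats, `target` returns it again, which corresponds to new sessions starting on the primary after recovery.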

Recovery: Sydney network is restored within 45 minutes. DNS is updated back to primary. New sessions start on Sydney primary. Existing sessions on Melbourne secondary gracefully migrate home over the next 5 minutes (no user action required).

Availability: 99.5% target met (43 min downtime in 5 years). Cost of failover infrastructure: $120K capex (warm standby Melbourne cluster). ROI justified by avoiding one grid blackout ($1M+ impact for energy ops).
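To check a downtime figure against an availability target, convert the target into a downtime budget for the period. A minimal sketch, assuming a 365-day year and counting all downtime equally; `downtime_budget_minutes` is an illustrative helper.

```python
def downtime_budget_minutes(availability_pct: float, days: float) -> float:
    """Minutes of downtime allowed over a period at a given availability target."""
    return (1 - availability_pct / 100) * days * 24 * 60


budget = downtime_budget_minutes(99.5, 5 * 365)
observed = 43  # minutes of downtime in 5 years, from the scenario

print(f"budget: {budget:,.0f} min, observed: {observed} min, met: {observed <= budget}")
```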

Frequently Asked Questions

How does Thinfinity compare to RDP/Citrix/Horizon for satellite links?

RDP, Citrix ICA, and VMware Horizon PCoIP are optimized for LAN (10ms latency, 100 Mbps bandwidth) and struggle over VSAT (144ms round-trip, 3 Mbps peak) because they send full screen redraws on every pixel change. Thinfinity detects static regions (e.g., SCADA gauge background) and sends only deltas. On a real Starlink link (Perth to Sydney), Thinfinity delivers 120–150 Kbps per session vs. RDP’s 2+ Mbps, a more than 10x efficiency gain that enables 15 concurrent sessions on a 3 Mbps Starlink uplink (RDP: 1 session).

Does Thinfinity work with legacy SCADA systems (e.g., 20-year-old GE iFIX)?

Yes. Thinfinity is a display protocol, not an application — if GE iFIX runs on Windows Server 2019 (or older, with support), Thinfinity captures the screen and renders it in HTML5 on the client with no agent needed inside iFIX. Compatibility is tested on GE iFIX versions 5.8–6.3, Siemens WinCC OA (all versions), Honeywell Experion PKS (2014 and newer), and ABB Ability 800xA (2016+).

What about SCADA command execution — can an operator command a mine ventilation shutdown from the ROC?

Yes, and that’s intentional. A SCADA command (e.g., ‘increase pump pressure to 120 bar’) executes exactly as it would if the operator were on-site — the difference is logging: every command is captured in the session recording and written to the audit log, with the operator’s identity tied to Okta MFA. ISA 62443 calls this ‘accountability.’ The alternative (no ROC, all field operators) gives you no accountability if someone misconfigures a critical system.

How much does Thinfinity Workspace cost?

Licensing is per-concurrent-user. A mining ROC with 50 concurrent operators (shift rotation of 100 total) pays $25,000/year ($500/user × 50); an energy grid with 200 concurrent operators pays $100,000/year. Licensing is simple — buy as many licenses as your peak concurrent load, with no per-device fees and no per-GB storage fees beyond OCI’s normal compute/storage billing.

Can an engineer working from home access the ROC?

Yes, if your security policy permits it. Geolocation MFA can be relaxed (e.g., ‘allow from anywhere if TOTP matches’), and the session still goes through the jump server and is still recorded. If an engineer’s device or credentials are compromised (malware, keylogger), the session recording will show the attacker’s commands and you can audit backward to see what data was accessed. Traditional field workstations give you no audit trail.

What if the SCADA system is air-gapped from IT?

The ROC must be air-gapped too: deploy Thinfinity on a separate OCI VCN (10.2.x.x range) that is NOT connected to the corporate IT network (10.0.x.x), with the jump server as the only gateway and network ACLs blocking everything except SCADA traffic. No email, no internet, no USB: this corresponds to ISA 62443 Security Level 4 (most stringent), not to be confused with Level 4 (IT/Corporate) in the zone table. Note: an air-gapped ROC loses some operational benefits (e.g., you can’t auto-update SCADA apps from internet patches), so patch management is manual only.

How many sessions can one OCI BM.Standard.E5 host?

A BM.Standard.E5 has 48 cores and 384 GB RAM; each full-desktop session (GE iFIX, historian access, mine planning) uses ~6 GB RAM and 2 cores, giving a RAM-bound ceiling of 64 sessions per node (384 / 6). That ceiling assumes cores are oversubscribed, since 2 dedicated cores per session would cap the node at 24 sessions. In production, Rio Tinto runs 48 sessions per node (75% utilization) to leave headroom for spikes and failover. At $0.48/hour, that’s $576/month for 48 sessions, or $12/session/month. Adding floating licenses ($2,500 for 50 users) and Thinfinity per-user cost ($500/user) totals ~$800 per concurrent user per year vs. $5,700/year over 5 years for a ruggedized workstation.
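The density figures in this answer can be reproduced with a few lines. The sketch uses only the RAM-based ceiling, the 75% utilization target, and the $576 monthly node cost quoted above. It does not model CPU contention, and the function names are illustrative.

```python
def ram_ceiling(node_ram_gb: int, session_ram_gb: int) -> int:
    """Maximum sessions that fit in node memory."""
    return node_ram_gb // session_ram_gb


def planned_sessions(ceiling: int, utilization: float) -> int:
    """Sessions actually scheduled, leaving headroom for spikes and failover."""
    return int(ceiling * utilization)


ceiling = ram_ceiling(384, 6)              # 384 GB node, ~6 GB per session
planned = planned_sessions(ceiling, 0.75)  # 75% utilization target
per_session_month = 576 / planned          # $576/month node cost from the text

print(ceiling, planned, per_session_month)
```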

Does ROC VDI meet NERC CIP Reliability Standard?

Yes, if designed correctly. Key requirements:

- CIP-005-7a Section 4.1: Identify and protect the ESP (Electronic Security Perimeter). Jump server is the perimeter device.

- CIP-005-7a Section 5.1.2: Log access to SCADA. VDI session recording logs every command.

- CIP-005-7a Section 5.4.1: Protect with ‘discrete’ perimeter devices. Avoid shared hardware (use OCI dedicated instances, not shared cloud).

- CIP-006-7a: Physical security of servers. OCI data centers are SOC 2 Type II certified.

- CIP-007-7a: Security patch management. Apply Windows/Linux patches monthly to session VMs; SCADA app patching via jump server.

If your ROC meets these controls, an energy audit (DNV, SGS, Deloitte) will certify NERC CIP compliance. Mining operations typically do not face NERC CIP (it’s US grid-specific), but ISA 62443 Level 3 is globally standard for mining.

Can we maintain backward compatibility with legacy GIS and historian systems?

Absolutely. Many mining sites still run OSIsoft PI 2016 or older Datamine versions, and because Thinfinity is display-agnostic, it captures the pixel output of any Windows application, including legacy software — so you don’t need to upgrade your historian or GIS platform. You should validate compatibility on a test system first, as some ultra-old applications (e.g., GE iFIX 5.5 on Windows XP) may have performance issues due to low-resolution graphics or missing codecs. Rio Tinto successfully runs Datamine 2010 (15+ years old) on Thinfinity sessions with acceptable latency over VSAT.

What's the licensing impact if we use Thinfinity to replace a VPN + Citrix model?

Citrix licensing is per-device (expensive in remote operations) while Thinfinity is per-concurrent-user (better scaling). If you have 500 Panasonic Toughbooks running Citrix at $3,000/device, that’s $1.5M. Migrating to Thinfinity ROC with 80 non-Citrix thin clients and 50 concurrent users at $25K/year ($100K across the four billed years of the 5-year model) is a 93% licensing cost reduction. Your existing Citrix VPN layer can run in parallel during migration (Phase 1 pilot model: 20% of users on Thinfinity ROC, 80% on VPN + Citrix), and gradual cutover reduces risk.

Conclusion

The ROC VDI model is not a pilot exercise — it is the operational template for the next decade of mining and energy. Browser-based VDI on OCI, combined with ISA/IEC 62443 zone isolation, NERC CIP audit trails, and satellite-aware codecs, turns $5,500 ruggedized workstations into $300 thin clients and lets engineers manage 400km-distant operations as if they were on-site. The 88–90% TCO reduction, the 10x bandwidth efficiency over RDP, and the 24-week path from pilot to fleet are all achievable today — the question is no longer whether to consolidate the FIFO model into a LILO ROC, but how fast you can get there.